What is the hybrid cloud mechanism based on Ceph object storage?

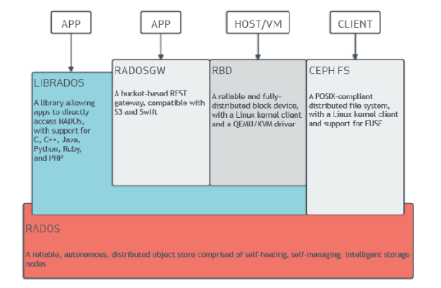

Ceph is one of the most popular open-source software-defined storage projects today. As shown in the figure above, it provides three kinds of storage interfaces on the same underlying platform: file storage, object storage, and block storage. This article focuses on object storage, i.e., the RADOS Gateway (radosgw, RGW).

With Ceph, you can quickly and conveniently build a private storage platform that offers good security, high availability, and good scalability. Private storage platforms are attracting more and more attention because of their security advantages, but they also have several drawbacks.

For example, consider a multinational company whose offices in different regions need access to the same business data. How can this long-distance data access requirement be supported? In a purely private environment there are two options. The first is to access the data in the local data center directly across regions, which inevitably results in high access latency. The second is to build a data center at the remote site and asynchronously copy local data to it, which is too costly.

In this scenario, a purely private cloud storage platform does not solve the problem well, while a hybrid cloud solution meets the requirement much better. For the remote data access scenario above, we can use public cloud storage near the remote site as the storage point, replicate the data of the local data center to the public cloud, and let remote clients access the data directly from the public cloud. This approach has clear advantages in overall cost and access speed, and it is well suited to long-distance data access.

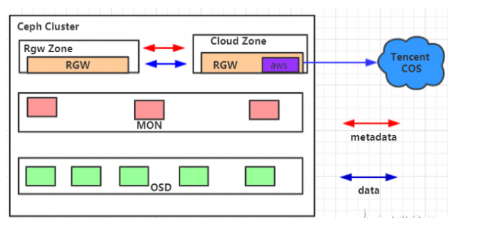

The hybrid cloud mechanism based on Ceph object storage is a good complement to the Ceph ecosystem. For this reason, the community is releasing the RGW Cloud Sync feature in the Mimic release. It initially supports exporting data from RGW to public cloud object storage platforms that support the S3 protocol. RGW Cloud Sync is implemented as a new S3-compatible sync plugin (currently called the aws sync module). The feature has developed as follows:

SUSE contributed the initial version, which supported only simple uploads. Red Hat then extended it with full semantic support, such as multipart upload and delete. Because synchronizing large files could otherwise exhaust memory, streaming upload has also been implemented in the public cloud sync feature to be released in Ceph Mimic (the M release).
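
To illustrate why streaming upload keeps memory bounded, here is a minimal Python sketch; it is not RGW's actual code. Instead of reading a whole object into memory before uploading it, the source is read in fixed-size parts and each part is handed to an uploader as soon as it is read, so peak memory stays around one part size regardless of object size. The `upload_part` callback is a hypothetical stand-in for an S3 multipart part upload, and the small part size is chosen only to keep the example short.

```python
import io
from typing import BinaryIO, Callable, Iterator


def iter_parts(src: BinaryIO, part_size: int) -> Iterator[bytes]:
    """Yield the source stream in parts of at most part_size bytes."""
    if part_size <= 0:
        raise ValueError("part_size must be positive")
    while True:
        chunk = src.read(part_size)
        if not chunk:
            return
        yield chunk


def stream_upload(src: BinaryIO, part_size: int,
                  upload_part: Callable[[int, bytes], None]) -> int:
    """Upload src part by part; only one part is held in memory at a time.

    upload_part is a hypothetical callback standing in for a multipart
    part upload; part numbers start at 1, as in S3.
    Returns the number of parts uploaded.
    """
    count = 0
    for number, part in enumerate(iter_parts(src, part_size), start=1):
        upload_part(number, part)
        count = number
    return count


if __name__ == "__main__":
    received = {}
    data = io.BytesIO(b"x" * 10_000)
    n = stream_upload(data, 4096,
                      lambda num, buf: received.__setitem__(num, len(buf)))
    print(n, received)  # 3 {1: 4096, 2: 4096, 3: 1808}
```

With real S3, every part except the last must be at least 5 MiB, so a practical part size would be at least that large.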

In this design, a Cloud Zone hosts the public cloud sync plugin and is configured as a read-only zone. It synchronizes data from RGW to the public cloud platform smoothly and lets users customize the cloud paths the data is written to. We have also improved the sync status display, which makes it quick to spot errors during synchronization and data that is currently lagging behind.
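
The following minimal Python sketch shows the idea behind a cloud sync plugin; it is not the actual aws sync module. A plugin receives each object change from the sync pipeline and writes it to a target store under a user-configurable path template. The `SyncModule` interface, the `${zonegroup}` and `${bucket}` template variables, and the in-memory dictionary standing in for a public cloud bucket are all hypothetical.

```python
from abc import ABC, abstractmethod
from string import Template
from typing import Dict


class SyncModule(ABC):
    """Hypothetical plugin interface: called for each replicated change."""

    @abstractmethod
    def sync_object(self, bucket: str, key: str, data: bytes) -> None: ...

    @abstractmethod
    def remove_object(self, bucket: str, key: str) -> None: ...


class CloudSyncModule(SyncModule):
    """Forwards objects to an S3-like target under a configurable path.

    The target is a plain dict standing in for a public cloud bucket;
    nothing is kept locally in this sketch.
    """

    def __init__(self, path_template: str, zonegroup: str,
                 target: Dict[str, bytes]):
        self.template = Template(path_template)
        self.zonegroup = zonegroup
        self.target = target

    def _target_key(self, bucket: str, key: str) -> str:
        # Expand the user-supplied template, then append the object key.
        prefix = self.template.substitute(zonegroup=self.zonegroup,
                                          bucket=bucket)
        return f"{prefix}/{key}"

    def sync_object(self, bucket: str, key: str, data: bytes) -> None:
        self.target[self._target_key(bucket, key)] = data

    def remove_object(self, bucket: str, key: str) -> None:
        # Deleting an object that was never synced is not an error.
        self.target.pop(self._target_key(bucket, key), None)


if __name__ == "__main__":
    cloud: Dict[str, bytes] = {}
    mod = CloudSyncModule("backup-${zonegroup}/${bucket}", "us", cloud)
    mod.sync_object("photos", "a.jpg", b"...")
    print(list(cloud))  # ['backup-us/photos/a.jpg']
    mod.remove_object("photos", "a.jpg")
    print(cloud)  # {}
```

Keeping path mapping inside the plugin means the rest of the sync pipeline does not need to know where, or under which layout, the data finally lands.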

The RGW Cloud Sync feature is essentially a new sync plugin (the aws sync module) built on top of Multisite. Let's first look at some of the core mechanisms of Multisite. Multisite is RGW's solution for remote data backup; in essence, it is a log-based asynchronous replication strategy.
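
A log-based asynchronous replication strategy can be sketched in a few lines of Python. This is a simplified model under our own assumptions, not RGW's implementation, and all class and method names are hypothetical. The source zone applies each write locally and appends it to an ordered log; a replica keeps a marker (its position in that log) and, at some later time, pulls and applies the entries after the marker. The gap between the log length and the marker is how far the replica lags behind, which is the kind of information a sync status display reports.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

# A log entry: (operation, key, value); value is None for deletes.
Entry = Tuple[str, str, Optional[str]]


@dataclass
class SourceZone:
    data: Dict[str, str] = field(default_factory=dict)
    log: List[Entry] = field(default_factory=list)

    def put(self, key: str, value: str) -> None:
        self.data[key] = value                # applied locally right away...
        self.log.append(("put", key, value))  # ...and logged for replicas

    def delete(self, key: str) -> None:
        self.data.pop(key, None)
        self.log.append(("delete", key, None))


@dataclass
class ReplicaZone:
    data: Dict[str, str] = field(default_factory=dict)
    marker: int = 0  # index of the next source log entry to consume

    def pull(self, source: SourceZone, max_entries: int = 100) -> int:
        """Apply up to max_entries new log entries; return how many."""
        batch = source.log[self.marker:self.marker + max_entries]
        for op, key, value in batch:
            if op == "put":
                self.data[key] = value
            else:
                self.data.pop(key, None)
        self.marker += len(batch)
        return len(batch)

    def lag(self, source: SourceZone) -> int:
        """Number of log entries not yet applied on this replica."""
        return len(source.log) - self.marker


if __name__ == "__main__":
    src, dst = SourceZone(), ReplicaZone()
    src.put("a", "1")
    src.put("b", "2")
    src.delete("a")
    print(dst.lag(src))            # 3
    dst.pull(src, max_entries=2)
    print(dst.lag(src), dst.data)  # 1 {'a': '1', 'b': '2'}
    dst.pull(src)
    print(dst.lag(src), dst.data)  # 0 {'b': '2'}
```

Because writes return before replicas catch up, a replica can briefly serve stale data; in exchange, the source zone never waits on a remote site.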

Multisite organizes deployments into three levels:

- Zone: lives in a single Ceph cluster, is served by a group of RGW instances, and corresponds to a set of backing pools.
- Zonegroup: contains at least one zone; data and metadata are synchronized between its zones.
- Realm: a separate namespace containing at least one zonegroup; metadata is synchronized between its zonegroups.
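
The containment rules for zones, zonegroups, and the top-level namespace (called a realm in Ceph) can be written down as a small Python data model. This is an illustrative sketch only: the class names, field names, pool names, and the `validate` function are hypothetical, not RGW types. It encodes the stated constraints that every zonegroup holds at least one zone and every realm holds at least one zonegroup.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Zone:
    name: str
    cluster: str              # the single Ceph cluster hosting this zone
    rgw_instances: List[str]  # gateways serving the zone
    pools: List[str]          # backing RADOS pools


@dataclass
class ZoneGroup:
    name: str
    # Data and metadata are synchronized among these zones.
    zones: List[Zone] = field(default_factory=list)


@dataclass
class Realm:
    name: str
    # Metadata is synchronized among these zonegroups.
    zonegroups: List[ZoneGroup] = field(default_factory=list)


def validate(realm: Realm) -> List[str]:
    """Return violations of the containment rules (empty if valid)."""
    errors = []
    if not realm.zonegroups:
        errors.append(f"realm {realm.name!r} has no zonegroup")
    for zg in realm.zonegroups:
        if not zg.zones:
            errors.append(f"zonegroup {zg.name!r} has no zone")
    return errors


if __name__ == "__main__":
    z1 = Zone("us-east", "ceph-a", ["rgw1", "rgw2"], ["us-east.rgw.buckets.data"])
    z2 = Zone("us-west", "ceph-b", ["rgw3"], ["us-west.rgw.buckets.data"])
    realm = Realm("corp", [ZoneGroup("us", [z1, z2]), ZoneGroup("eu")])
    print(validate(realm))  # ["zonegroup 'eu' has no zone"]
```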

The main working mechanisms in Multisite are Data Sync, the Async Framework, and Sync Plugins. The Data Sync part analyzes the data synchronization process in Multisite, the Async Framework part introduces the coroutine framework Multisite uses, and the Sync Plugin part introduces some of the sync plugins in Multisite.
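
RGW implements its own coroutine framework in C++; the general purpose of such a framework is to let a small number of threads drive many concurrent sync operations (for example, one per log shard) without blocking on each network round trip. The Python `asyncio` sketch below is only an analogy for that idea, not RGW code: each hypothetical `sync_shard` coroutine yields control while it waits on simulated I/O, so all shards make progress interleaved on a single thread.

```python
import asyncio
from typing import Dict, List, Tuple


async def sync_shard(shard: int, entries: int,
                     done: Dict[int, int], order: List[int]) -> None:
    """Hypothetical per-shard sync loop that applies entries one at a time."""
    for _ in range(entries):
        # Stands in for a non-blocking fetch from the remote zone; while
        # this coroutine waits, the event loop runs the other shards.
        await asyncio.sleep(0)
        done[shard] = done.get(shard, 0) + 1
        order.append(shard)  # record the interleaving for illustration


async def sync_all(num_shards: int,
                   entries_per_shard: int) -> Tuple[Dict[int, int], List[int]]:
    done: Dict[int, int] = {}
    order: List[int] = []
    # One coroutine per shard, all multiplexed on a single thread.
    await asyncio.gather(*(sync_shard(s, entries_per_shard, done, order)
                           for s in range(num_shards)))
    return done, order


if __name__ == "__main__":
    done, order = asyncio.run(sync_all(num_shards=3, entries_per_shard=2))
    print(done)   # {0: 2, 1: 2, 2: 2}
    print(order)
```

The printed `order` shows shards taking turns rather than each finishing before the next starts, which is the behavior a coroutine-based sync engine relies on.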

Data Sync replicates data within a zonegroup: data written to one zone is eventually synchronized to all the other zones in that zonegroup.

It proceeds in three phases:

- Init: establishes a mapping between the log shards of the remote source zone and the local zone, so that the remote datalog is tracked locally. The datalog is used to determine whether there is data to update.
- Build Full Sync Map: fetches the metadata of the remote buckets and builds a mapping that records each bucket's sync status. If the source zone holds no data when multisite is configured, this phase is skipped and sync proceeds directly to Data Sync.
- Data Sync: starts synchronizing object data. The local zone fetches the source zone's datalog through the RGW API, consumes the corresponding bilog (bucket index log), and synchronizes the data.
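
The three phases can be modeled as a small state machine. This Python sketch is a simplified illustration under our own assumptions, not RGW's implementation: the source zone is reduced to a dict of buckets plus per-shard datalog lists naming changed buckets, the local markers start at the log position seen during Init, and copying a changed bucket's current contents stands in for replaying its bilog. As in the description, the full sync map phase is skipped when the source zone holds no data.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Phase(Enum):
    INIT = "init"
    BUILD_FULL_SYNC_MAP = "build_full_sync_map"
    DATA_SYNC = "data_sync"


@dataclass
class SourceZone:
    buckets: Dict[str, Dict[str, bytes]]  # bucket -> {object key -> data}
    datalog: List[List[str]]              # per shard: names of changed buckets


@dataclass
class SyncingZone:
    phase: Phase = Phase.INIT
    markers: Dict[int, int] = field(default_factory=dict)        # shard -> position
    full_sync_map: Dict[str, str] = field(default_factory=dict)  # bucket -> status
    buckets: Dict[str, Dict[str, bytes]] = field(default_factory=dict)

    def _copy_bucket(self, src: SourceZone, name: str) -> None:
        # Simplification: copy current contents instead of replaying the bilog.
        self.buckets[name] = dict(src.buckets.get(name, {}))

    def step(self, src: SourceZone) -> Phase:
        if self.phase is Phase.INIT:
            # Track each remote datalog shard locally; incremental sync starts
            # at the current position, existing data is left to full sync.
            self.markers = {s: len(e) for s, e in enumerate(src.datalog)}
            # No data on the source: skip the full sync map entirely.
            self.phase = (Phase.BUILD_FULL_SYNC_MAP if src.buckets
                          else Phase.DATA_SYNC)
        elif self.phase is Phase.BUILD_FULL_SYNC_MAP:
            # Record every existing remote bucket and its sync status.
            self.full_sync_map = {name: "pending" for name in src.buckets}
            self.phase = Phase.DATA_SYNC
        else:
            # Finish buckets still pending from the full sync map...
            for name, status in self.full_sync_map.items():
                if status == "pending":
                    self._copy_bucket(src, name)
                    self.full_sync_map[name] = "done"
            # ...then consume new datalog entries shard by shard.
            for shard, entries in enumerate(src.datalog):
                for name in entries[self.markers.get(shard, 0):]:
                    self._copy_bucket(src, name)
                self.markers[shard] = len(entries)
        return self.phase


if __name__ == "__main__":
    src = SourceZone(buckets={"b1": {"k": b"v1"}}, datalog=[[], ["b1"]])
    dst = SyncingZone()
    print(dst.step(src))  # Phase.BUILD_FULL_SYNC_MAP
    print(dst.step(src))  # Phase.DATA_SYNC
    dst.step(src)         # full sync copies b1
    src.buckets["b2"] = {"x": b"1"}
    src.datalog[0].append("b2")  # a later write recorded in the datalog
    dst.step(src)         # incremental sync picks up b2
    print(dst.buckets == src.buckets)  # True
```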
