What is the history of the digital circuit, and what are its future development trends?

Everything a computer does, including information transmission and communication between devices, ultimately comes down to digital circuits switching between states. Before the 1950s, digital circuits were built from vacuum tubes. The diode invented by Fleming and the triode that De Forest derived from it made possible the first general-purpose electronic computer, ENIAC (Electronic Numerical Integrator and Computer).

The first generation of electronic circuits was built from large glass tubes with the air pumped out, hence the name vacuum tubes. A vacuum tube uses a filament or an electrode to emit a stream of electrons, which is then used to control the current. However, not all tubes are evacuated: some are gas-filled, and some smaller tubes use photosensitive materials or magnetic fields to control the flow of electrons. They all have the same drawbacks: they are expensive, consume a lot of electricity, and give off a lot of heat. They are also very unreliable and require a lot of maintenance. And they are large, which makes it difficult to build smaller "computers."

The transistor grew out of research at Bell Labs aimed at finding a component that was cheap, consumed little or no power, and did not heat up. The component also had to be easy to manufacture, fast at switching, and small. In 1947, working in a group led by William Shockley, John Bardeen and Walter Brattain invented a transistor that met these requirements. The transistor is small, low in resistance, has no moving parts (and therefore low losses), is reliable, and generates hardly any heat. Its invention made research on electronic circuits more active than ever before, with new advances in transistor performance, size, and reliability appearing almost every month.

In 1965, Gordon Moore, a co-founder of Fairchild and later of Intel, published a paper predicting that the number of components per integrated circuit would double every year for the next decade. In 1975, he revisited his prediction and revised it to a doubling every two years. This is the famous Moore's Law.
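To get a feel for the difference between the two doubling rates, the growth can be worked out directly. This is only a minimal sketch: the function name `projected_components` and the ten-year horizon are illustrative choices, not something taken from Moore's paper.

```python
def projected_components(start_count: float, years: float,
                         doubling_period_years: float) -> float:
    """Component count after `years`, doubling once per `doubling_period_years`."""
    return start_count * 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Growth factor over one decade, relative to a starting count of 1.
    for period in (1.0, 2.0):
        factor = projected_components(1, 10, period)
        print(f"doubling every {period:g} year(s): x{factor:,.0f} after 10 years")
```

Doubling every year multiplies the count by 1,024 over a decade, while doubling every two years multiplies it by only 32, which is why the 1975 revision matters so much.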

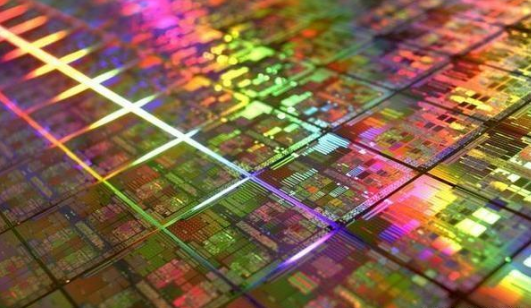

One of the first semiconductor processes, in 1971, had a feature size of 10 microns (100,000 times smaller than a meter). By 2001, it was 130 nanometers, nearly 80 times smaller than in 1971. In 2017, the smallest process was 10 nanometers, which is 1,000 times smaller than the 1971 process.
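The shrink factors between these process nodes can be checked with a few lines of arithmetic. The sketch below uses only the three feature sizes quoted above; the dictionary and function names are assumptions made for illustration.

```python
# Feature sizes quoted in the text, expressed in nanometers (10 microns = 10,000 nm).
PROCESS_NODE_NM = {
    1971: 10_000,
    2001: 130,
    2017: 10,
}


def shrink_factor(from_year: int, to_year: int) -> float:
    """How many times smaller the `to_year` feature size is than the `from_year` one."""
    return PROCESS_NODE_NM[from_year] / PROCESS_NODE_NM[to_year]


if __name__ == "__main__":
    print(f"1971 -> 2001: {shrink_factor(1971, 2001):.1f}x smaller")
    print(f"1971 -> 2017: {shrink_factor(1971, 2017):.0f}x smaller")
```

Note that these are linear dimensions. The area of a feature shrinks with the square of the factor, which is what lets so many more transistors fit on the same chip.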

As large-scale integrated circuits have developed, transistors have become smaller and smaller, the number of transistors per chip has grown geometrically, and the manufacturing process has become harder and harder. Overcoming these technical and process barriers requires not only a great deal of time and research, but also a great deal of money and investment. This is what set off the Moore's Law crisis.

As electronic components shrink to the nanoscale, quantum properties and effects gradually emerge. As we keep reducing the size of the transistor, the depletion layer of its p-n junction shrinks too. The depletion layer is essential for blocking the flow of electrons. Researchers have calculated that transistors smaller than 5 nm will be unable to stop electron flow, because electrons tunnel through the depletion region. With tunneling, the electrons behave as if the depletion region were not there and pass straight through it. If the electron flow cannot be blocked, the transistor fails.
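The sensitivity of tunneling to barrier width can be illustrated with the textbook estimate for a rectangular barrier, where the transmission probability falls off roughly as exp(-2κd) with κ = sqrt(2m(V - E))/ħ. The sketch below is not a device model and is not meant to reproduce the 5 nm figure: the 1 eV barrier height and the use of the free-electron mass are assumptions chosen purely for illustration.

```python
import math

HBAR_J_S = 1.054571817e-34          # reduced Planck constant
ELECTRON_MASS_KG = 9.1093837015e-31  # free-electron mass (assumed; real devices use an effective mass)
EV_IN_JOULES = 1.602176634e-19


def tunneling_probability(width_m: float, barrier_ev: float = 1.0) -> float:
    """Approximate transmission through a rectangular barrier of the given width.

    `barrier_ev` is the barrier height above the electron energy (hypothetical value).
    """
    kappa = math.sqrt(2 * ELECTRON_MASS_KG * barrier_ev * EV_IN_JOULES) / HBAR_J_S
    return math.exp(-2 * kappa * width_m)


if __name__ == "__main__":
    for width_nm in (10, 5, 2, 1):
        p = tunneling_probability(width_nm * 1e-9)
        print(f"barrier width {width_nm:>2} nm -> tunneling probability ~ {p:.1e}")
```

Under these assumptions, each nanometer removed from the barrier raises the tunneling probability by several orders of magnitude, which is why leakage becomes unmanageable so abruptly as dimensions shrink.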

In addition, we are slowly approaching the size of the atom itself. In theory, we cannot build a transistor smaller than an atom. A silicon atom is roughly 0.2 nanometers across, and the gate size of the transistors we manufacture now is only a few tens of times that. Even if we set quantum effects aside, we will reach the physical limit of the transistor and will not be able to make it any smaller.

Dennard scaling is considered a sister law of Moore's Law. It was formulated by Robert Dennard in 1974 and states that as transistors become smaller, their power density stays constant. This means that as transistors shrink, the voltage and current required to operate them decrease as well. This law allowed manufacturers to shrink transistors and raise clock speeds by large jumps with each generation. However, around 2007, Dennard scaling broke down. This is because at smaller sizes, leakage currents cause the transistors to heat up, which in turn causes further losses.
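In the standard formulation of Dennard scaling, dynamic power is P = C·V²·f, and shrinking linear dimensions by a factor k scales capacitance and voltage by 1/k, frequency by k, and area by 1/k², so power per unit area is unchanged. The sketch below compares that ideal case with a simplified post-Dennard case in which voltage can no longer be lowered. All quantities are relative to a baseline of 1.0, and the function name is an illustrative assumption.

```python
def relative_power_density(k: float, voltage_scales: bool = True) -> float:
    """Power density after shrinking linear dimensions by factor k (baseline = 1.0).

    Uses the dynamic-power model P = C * V**2 * f and ignores leakage.
    """
    capacitance = 1 / k                      # capacitance shrinks with dimensions
    voltage = 1 / k if voltage_scales else 1.0
    frequency = k                            # smaller transistors switch faster
    area = 1 / k ** 2
    power = capacitance * voltage ** 2 * frequency
    return power / area


if __name__ == "__main__":
    for k in (1.4, 2.0, 4.0):
        ideal = relative_power_density(k)
        stuck = relative_power_density(k, voltage_scales=False)
        print(f"k={k}: ideal scaling {ideal:.2f}x, fixed voltage {stuck:.2f}x")
```

With ideal scaling the density stays at 1.0 for any k. Once voltage stops scaling, the same shrink raises power density by k², which is the heat problem that ended the era of rapidly rising clock speeds.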

You may have noticed that although transistors have kept shrinking, CPU clock speeds have barely increased over the past decade. This is a consequence of the collapse of Dennard scaling. The high losses at high clock rates are also why smartphone chips run at lower clock speeds (typically around 1.5 GHz).

By improving current chip implementations and building better instruction pipelines, we can still improve chip performance. Along these lines, Stanford professor Jonathan Koomey proposed Koomey's law: the number of computations per joule of energy doubles about every 1.5 years. This trend is expected to continue until 2048, when Landauer's principle and the basic laws of thermodynamics will prevent further improvement. Today's computers operate at roughly 0.00001% of the efficiency that the Landauer limit allows.
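Both numbers in this paragraph can be sanity-checked. Landauer's principle puts the minimum energy to erase one bit at k_B·T·ln 2, and a fixed doubling period turns an efficiency gap into a number of years. The sketch below takes the 0.00001% figure and the 1.5-year doubling period at face value; the 300 K operating temperature and the function names are assumptions.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_limit_joules(temperature_k: float = 300.0) -> float:
    """Minimum energy to erase one bit at the given temperature (k_B * T * ln 2)."""
    return BOLTZMANN_J_PER_K * temperature_k * math.log(2)


def years_to_reach_limit(current_fraction: float,
                         doubling_period_years: float = 1.5) -> float:
    """Years of steady doubling needed to go from `current_fraction` of the limit to 1."""
    doublings_needed = math.log2(1 / current_fraction)
    return doublings_needed * doubling_period_years


if __name__ == "__main__":
    print(f"Landauer limit at 300 K: {landauer_limit_joules():.3e} J per bit")
    # 0.00001% expressed as a fraction is 1e-7.
    print(f"Years of doubling remaining: {years_to_reach_limit(1e-7):.1f}")
```

At room temperature the limit is a little under 3e-21 joules per bit, and closing a gap of seven orders of magnitude at one doubling every 1.5 years takes roughly 35 years, which is in line with a cutoff in the 2040s.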

Programs in traditional languages such as Java, C++, and Python are typically written to run on a single device. But as devices become smaller and cheaper, we can run the same program simultaneously, in parallel, on many chips to improve performance further. In this regard, languages and runtimes with built-in support for concurrency, such as Go and Node.js, will play a more important role.
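As a rough illustration of running the same function in parallel, the sketch below splits one job into chunks and hands each chunk to a separate worker process using Python's standard `concurrent.futures` module. It only spreads work across the cores of one machine, not across many chips or devices, and the prime-counting workload and helper names are arbitrary choices for the example.

```python
import math
from concurrent.futures import ProcessPoolExecutor


def count_primes(bounds: tuple[int, int]) -> int:
    """Count primes in the half-open range [lo, hi) by trial division."""
    lo, hi = bounds
    return sum(
        1
        for n in range(max(lo, 2), hi)
        if all(n % d for d in range(2, math.isqrt(n) + 1))
    )


def split_range(limit: int, parts: int) -> list[tuple[int, int]]:
    """Split [0, limit) into `parts` contiguous half-open chunks."""
    step = limit // parts
    return [
        (i * step, limit if i == parts - 1 else (i + 1) * step)
        for i in range(parts)
    ]


def parallel_count(limit: int, workers: int = 4) -> int:
    """Run count_primes on each chunk in its own process and add up the results."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_primes, split_range(limit, workers)))


if __name__ == "__main__":
    print(parallel_count(100_000))
```

The same pattern, one function applied independently to many chunks of data, is what makes a workload easy to spread over more cores or more machines.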

Researchers around the world are looking for new, more innovative ways to make smaller and faster transistors. Materials such as gallium nitride and graphene have been shown to have lower losses at higher switching frequencies.

The most likely solution at present is to develop quantum computers. Companies like D-Wave and Rigetti Computing are working extensively in this area, and, more importantly, a scaling law for qubits has not even begun to play out. For conventional chips, the way around the end of Dennard scaling is to place more cores on a single chip to improve performance. Quantum computing, meanwhile, has shown great promise. Its advantage is that a qubit can be in several states at once (unlike a classical bit, which is either 0 or 1). Some experimental quantum computations have already achieved good results; for example, true random number generation based on quantum technology has been successful.